OPEN RESOURCES

Start Learning With WeCloudOpen

WeCloudOpen is here to help you unlock your full potential in tech with our free courses and workshops. Learn the fundamentals of coding and data, and become a proficient tech professional in no time!

WeCloudOpen Course

Our comprehensive courses on Python and SQL are the perfect way to start your journey into the world of tech. WeCloudOpen ensures you learn the basics without any hassle.

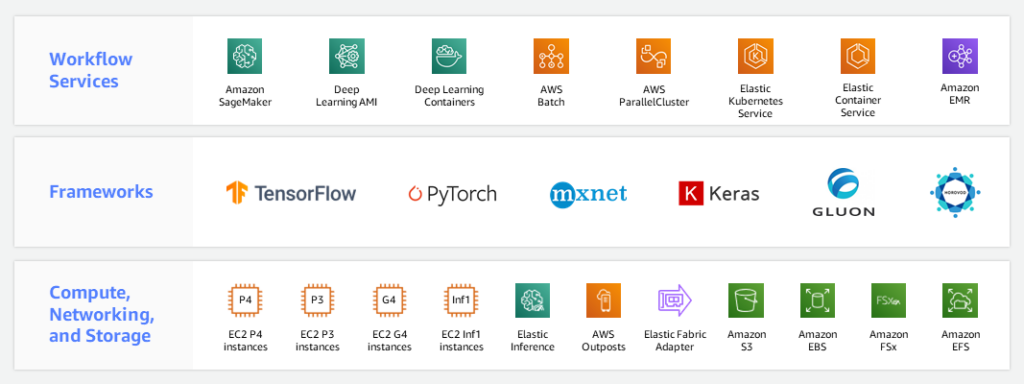

WeCloudOpen Workshop

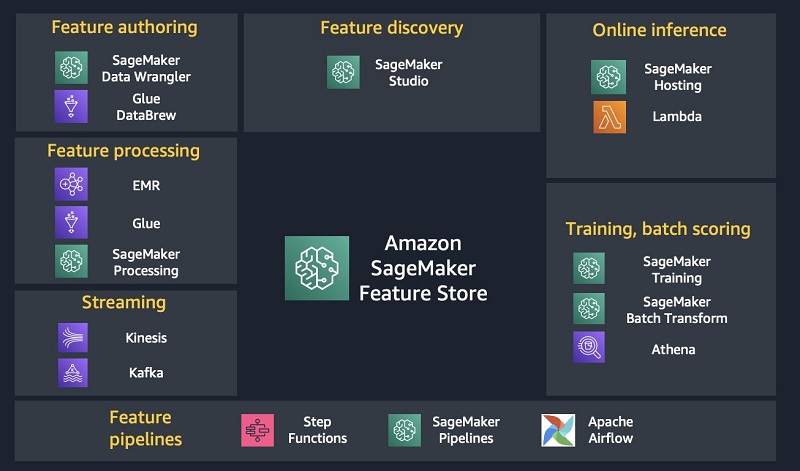

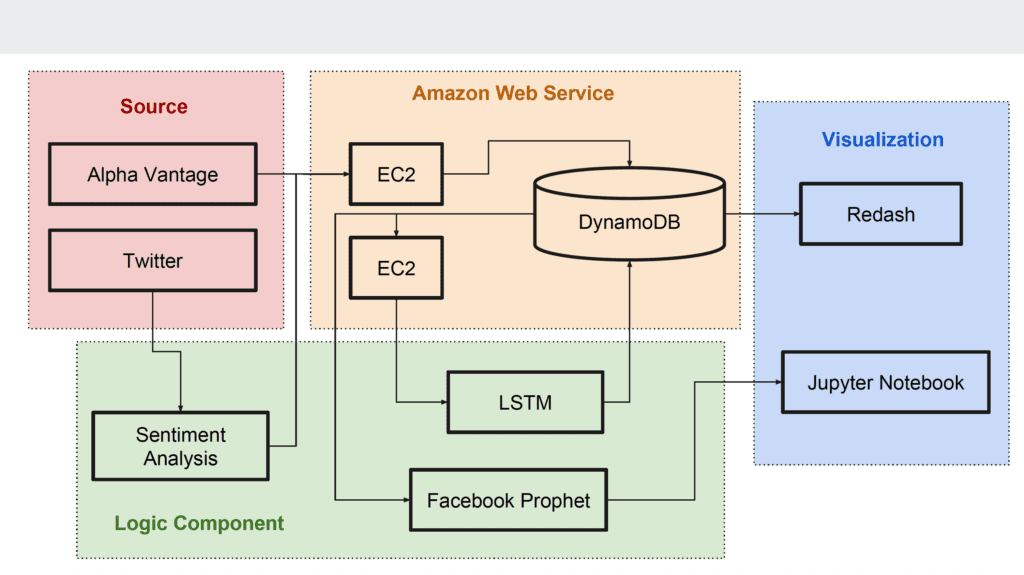

Our free workshops cover topics such as Business Intelligence, Data Science, Data Engineering, DevOps, and Machine Learning, giving you a head start in your tech career.