When most developers think about artificial intelligence, they immediately consider algorithms, models, and data. However, anyone who has attempted to build an AI-powered application in the real world will tell you that the toughest part isn’t always the modeling. It’s about getting the environment right.

Framework versions, CUDA dependencies, and conflicting Python libraries can all cause problems. Every small change has the potential to disrupt your workflow.

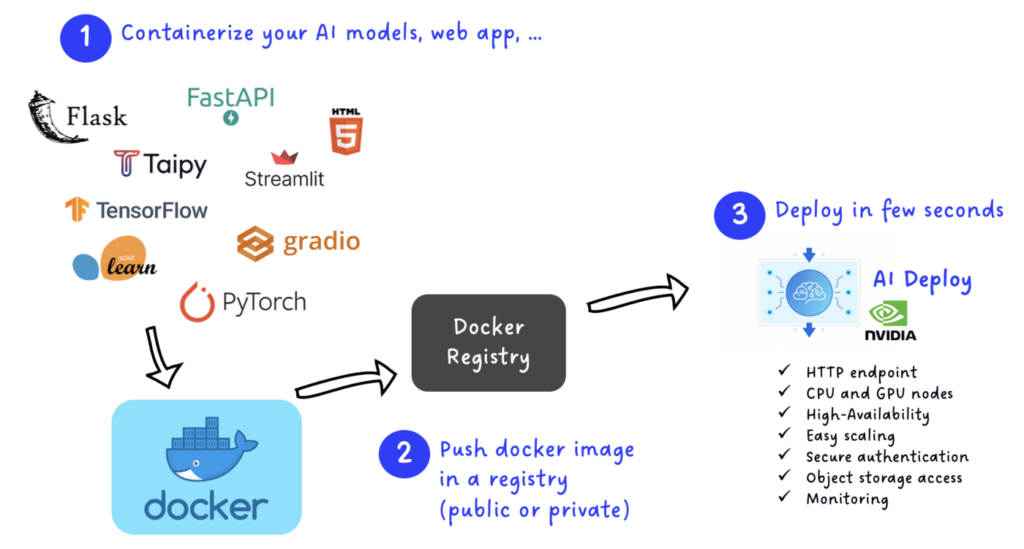

This is where Docker comes in. It’s a powerful tool that brings portability, consistency, and scalability to AI workflows and makes it easier to build AI apps. With Docker, your models and applications can run consistently across laptops, cloud servers, and production environments without the hassle of dependency issues.

In this blog, we will explore how building AI apps with Docker simplifies development, from local testing to production deployment. WeCloudData also has an exciting upcoming event on this topic; keep reading for the details at the end.

Follow this link to get the basic understanding of what Docker is and how it works.

Why Use Docker for AI?

Before we get into details, let’s ground ourselves in why Docker has become a cornerstone in AI workflows.

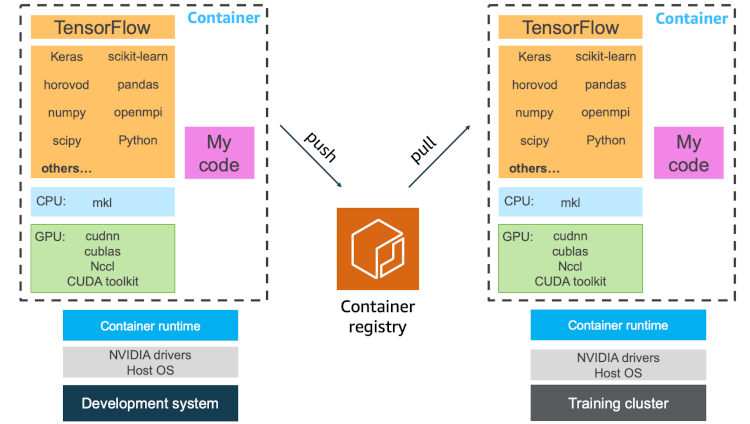

Reproducibility and Consistency

Docker bundles all dependencies, including framework versions, libraries, and system tools. This means your AI application behaves consistently across all machines. You won’t have to worry about unexpected issues like “it works on my machine.”

Performance with Minimal Overhead

Because containers share the host kernel instead of virtualizing hardware, Docker generally adds very little performance overhead, even for deep learning tasks that use GPUs.

Environment Isolation and Security

Using containers helps separate AI workloads. This limits risks from faulty code or conflicting dependencies, providing a more secure and stable environment.

Community Ecosystem

Prebuilt images for frameworks like TensorFlow and PyTorch, as well as for Hugging Face models, are widely available.

Key Docker Tools and Features for AI

Docker Model Runner

Docker’s Model Runner allows you to run AI models locally with your existing Docker workflows, requiring no additional tools. You can pull supported models from Docker Hub or Hugging Face as OCI artifacts and run them with native GPU acceleration.

Docker Compose and Docker Offload

Docker Compose helps you manage multi-container applications by combining AI services, model runners, and monitoring tools in one configuration. Docker Offload lets you run workloads on remote GPU-enabled hosts, which is useful for large-scale experiments.

Model Context Protocol (MCP)

MCP defines a standard way for AI models to work with external data and services. Docker containers make it easier to package MCP servers so they behave consistently across different architectures.

Use Cases: How Docker Powers Real AI Applications

1. Chatbots and Conversational AI

Containers let you run LLMs locally and experiment with frameworks like LangChain and techniques like retrieval-augmented generation (RAG). You can also deploy them as microservices. Docker Compose makes it easy to combine the model, backend, and frontend into one app.

2. Computer Vision for Manufacturing

Factories use Docker to deploy vision models on various edge devices. Each device runs the same container, so updates and bug fixes spread quickly.

3. Fraud Detection in Finance

Banks package fraud detection pipelines in containers to ensure that data preprocessing, model inference, and monitoring take place in controlled settings. This cuts downtime and boosts compliance.

4. Recommendation Engines in Retail

E-commerce platforms deliver recommendation models as Docker images. This allows for easy A/B testing of new models by running new containers alongside the old ones.
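
As a rough illustration of running a new model container alongside the old one, the sketch below hashes each user ID into a stable bucket and routes a fixed share of users to the new container. The service names `recommender-v1` and `recommender-v2`, the port, and the 10% share are hypothetical and only serve the example.

```python
import hashlib

# Hypothetical container endpoints; names and ports are illustrative only.
BACKENDS = {
    "control": "http://recommender-v1:8000",
    "candidate": "http://recommender-v2:8000",
}


def bucket_for(user_id: str, buckets: int = 100) -> int:
    """Map a user ID to a stable bucket in the range [0, buckets)."""
    digest = hashlib.sha256(user_id.encode("utf-8")).hexdigest()
    return int(digest, 16) % buckets


def choose_backend(user_id: str, candidate_share: int = 10) -> str:
    """Route roughly candidate_share percent of users to the new container."""
    variant = "candidate" if bucket_for(user_id) < candidate_share else "control"
    return BACKENDS[variant]
```

Because the bucket depends only on the user ID, a given user keeps seeing the same model for the duration of the test, and setting `candidate_share` to 0 sends everyone back to the old container.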

5. Hobbyist AI Projects

From AI art generators to smart home assistants, Docker helps people skip the hassle of setting up environments and focus on being creative.

A Step-by-Step Guide: Sample AI App with Docker Model Runner

Here’s a blueprint for building a local AI chatbot using Docker Model Runner, inspired by Docker’s guides.

1. Prep Your System

- Install Docker Desktop (v4.40+)

- Enable Docker Model Runner under Experimental Features in settings

2. Pull an AI Model

Run this command:

`docker model pull ai/llama3.2:1B-Q8_0`

2.1 Define Your App Architecture

Frontend: React/TypeScript UI for streaming responses

Backend: Python server to communicate with Model Runner

Observability: Add Prometheus, Grafana, and Jaeger for metrics and tracing.
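
To make the backend’s role more concrete, here is a minimal sketch of how a Python service might build a chat request for Model Runner using only the standard library. It assumes Model Runner exposes an OpenAI-compatible chat completions endpoint. The base URL below and the helper names `build_chat_request` and `ask` are assumptions for illustration, so check the Model Runner documentation for your Docker Desktop version.

```python
import json
import urllib.request

# Assumed base URL for reaching Model Runner from inside a container.
BASE_URL = "http://model-runner.docker.internal/engines/v1"
MODEL = "ai/llama3.2:1B-Q8_0"  # the model pulled in the previous step


def build_chat_request(
    prompt: str, base_url: str = BASE_URL, model: str = MODEL
) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request."""
    payload = {"model": model, "messages": [{"role": "user", "content": prompt}]}
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(prompt: str) -> str:
    """Send the prompt and return the reply text (needs Model Runner running)."""
    with urllib.request.urlopen(build_chat_request(prompt), timeout=60) as resp:
        body = json.load(resp)
    return body["choices"][0]["message"]["content"]
```

Keeping request construction separate from the network call makes the backend easier to unit test without a running model.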

2.2 Write Docker Compose File

Use Compose to spin up:

- Model Runner

- Backend API

- Frontend

- Monitoring tools

2.3 Build and Run Locally

Once your services are defined:

`docker compose up`

The backend talks to Model Runner, the frontend streams responses to the user, and the monitoring stack collects metrics and traces.
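
For the streaming part, OpenAI-compatible endpoints typically send server-sent events: one `data: {...}` line per chunk, ending with `data: [DONE]`. The sketch below shows how a backend might pull the text out of those lines before forwarding it to the frontend. It assumes that chunk format, and the function name and sample lines are hypothetical.

```python
import json
from typing import Iterable, Iterator


def iter_tokens(lines: Iterable[str]) -> Iterator[str]:
    """Yield text fragments from OpenAI-style streaming lines."""
    for raw in lines:
        line = raw.strip()
        if not line.startswith("data:"):
            continue  # skip blank lines and other event fields
        data = line[len("data:"):].strip()
        if data == "[DONE]":
            break  # end-of-stream marker
        chunk = json.loads(data)
        for choice in chunk.get("choices", []):
            text = choice.get("delta", {}).get("content")
            if text:
                yield text


# Hand-written sample lines standing in for a real response stream.
sample = [
    'data: {"choices": [{"delta": {"content": "Hel"}}]}',
    "",
    'data: {"choices": [{"delta": {"content": "lo"}}]}',
    "data: [DONE]",
]
print("".join(iter_tokens(sample)))  # prints "Hello"
```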

2.4 Push to Production

You can easily push to cloud platforms using Compose + Docker Offload or direct cloud integrations.

Why Learning About Docker Matters Now

Across industries, companies are realizing that building AI isn’t just about better models; it’s also about better environments. Without containers, AI development can be brittle and inconsistent. With Docker, it becomes repeatable, portable, and production-ready.

And this is exactly the theme of our upcoming session.

Join Us Live: Getting Started with Local AI Development

On September 4, 2025, at 7:00 PM Eastern, WeCloudData is hosting a live event:

- Topic: Getting started with local AI development

- Speaker: Mike Coleman, Staff Solutions Architect at Docker

- Why Attend: Learn how to use Docker tools to simplify AI development, run models locally, and scale them into the cloud.

Mike has spent years at Google Cloud, AWS, VMware, and Microsoft, helping developers tackle the very challenges we covered here. At Docker, he now focuses on helping teams confidently adopt container-based AI workflows.

Fun fact: Mike builds animated holiday light shows featuring over 32,000 lights synchronized to more than two dozen songs.

If you want to move from “works on my machine” to AI apps that scale in the real world, don’t miss this session. Reserve your spot and learn from a Docker insider.

Learn with WeCloudData

Enroll in our Introduction to Docker course and start mastering Docker today! Or if you are an organization looking to upskill its employees, we recommend that you explore our corporate AI upskilling program.

What WeCloudData Offers

- Career-Focused Bootcamps: Learn Python, Data Science, Data Engineering, Machine Learning, and AI via our learning tracks.

- Corporate Training: Hands-on, expert-led programs built for forward-thinking companies, designed to bridge the skills gap and help your organization thrive in today’s data-driven economy.

- Live public training sessions led by industry experts

- Career workshops to prepare you for the job market

- Dedicated career services

- Portfolio support to help showcase your skills to potential employers.

- Enterprise Clients: Our expert team offers 1-on-1 consultations.

Join WeCloudData to kickstart your learning journey and unlock new career opportunities in Artificial Intelligence.