Machine Learning Engineering focuses on the development, deployment, and optimization of machine learning systems. It combines principles from software engineering, data science, and statistics to design and implement effective machine learning solutions. It encompasses several domains, including:

1. Natural Language Processing (NLP)

NLP is a branch of artificial intelligence (AI) and computational linguistics that focuses on the interaction between computers and human language. It aims to enable computers to understand, interpret, and generate human language in a way that is both meaningful and useful.

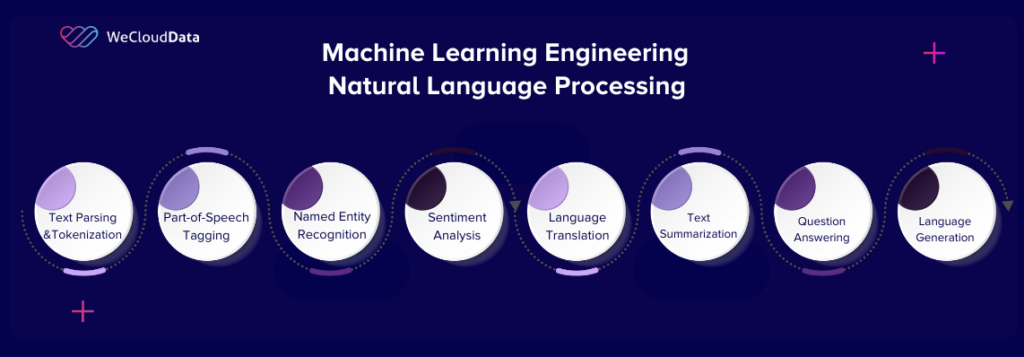

NLP covers a range of language processing tasks, including:

- Text Parsing and Tokenization: Breaking down text into smaller units, such as words or sentences (tokens), to analyze their structure and relationships.

- Part-of-Speech Tagging: Assigning grammatical tags (noun, verb, adjective, etc.) to individual words in a sentence, which helps in understanding the syntactic role of words.

- Named Entity Recognition (NER): Identifying and categorizing named entities such as people, organizations, locations, dates, or other specific entities mentioned in text.

- Sentiment Analysis: Determining the sentiment or emotional tone of a given text, often classifying it as positive, negative, or neutral.

- Language Translation: Translating text from one language to another, enabling communication and understanding across different languages.

- Text Summarization: Generating concise summaries or extracts from longer texts, condensing the main points or key information.

- Question Answering: Developing systems that can understand questions posed in natural language and provide relevant and accurate answers.

- Language Generation: Creating human-like text or generating coherent and contextually relevant responses.
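
Two of the tasks above, tokenization and sentiment analysis, can be sketched with the Python standard library alone. This is a minimal illustration, not a production approach: the regular expressions are rough heuristics, and the `POSITIVE` and `NEGATIVE` word sets are a hypothetical toy lexicon, whereas real systems typically learn such signals from labeled data.

```python
import re

# Hypothetical toy lexicon, for illustration only.
POSITIVE = {"good", "great", "love", "excellent"}
NEGATIVE = {"bad", "poor", "hate", "terrible"}


def split_sentences(text):
    """Split after '.', '!' or '?' followed by whitespace (a rough heuristic)."""
    parts = re.split(r"(?<=[.!?])\s+", text.strip())
    return [p for p in parts if p]


def tokenize(sentence):
    """Lowercase the sentence and return word tokens, dropping punctuation."""
    return re.findall(r"[a-z0-9']+", sentence.lower())


def sentiment(tokens):
    """Classify tokens as positive, negative, or neutral by counting lexicon hits."""
    score = sum(t in POSITIVE for t in tokens) - sum(t in NEGATIVE for t in tokens)
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"


text = "I love this phone. The battery is terrible! It arrived on Monday."
for sentence in split_sentences(text):
    tokens = tokenize(sentence)
    print(tokens, sentiment(tokens))
```

A lexicon count like this ignores negation ("not good") and context, which is one reason practical sentiment models are usually trained rather than hand-written.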

NLP utilizes a variety of techniques, including statistical models, machine learning, deep learning, and linguistic rules, to process and analyze language data. It involves tasks at different levels of linguistic analysis, ranging from low-level tokenization and syntactic parsing to higher-level semantic understanding and discourse analysis.

NLP has numerous applications in areas such as information retrieval, chatbots, virtual and voice assistants, machine translation, content recommendation, text mining, and customer support. It plays a crucial role in enabling computers to interact with and understand human language, bridging the gap between humans and machines in terms of communication and comprehension.

2. Computer Vision

Computer Vision is a field of study and technology that focuses on enabling computers to understand and interpret visual information from images or videos. It aims to replicate human visual perception and comprehension by developing algorithms and systems that can extract meaningful insights and make decisions based on visual data.

In practice, this involves tasks such as image classification, object detection, image segmentation, facial recognition, pose estimation, and scene understanding.

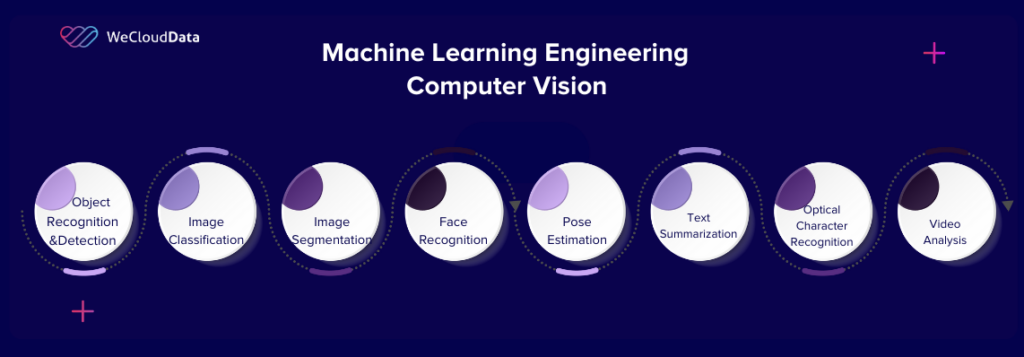

Computer vision spans many tasks and applications. Here are some of the major task areas within computer vision:

- Object Recognition and Detection: Identifying and localizing objects within images or videos. This includes tasks such as object classification (assigning labels to objects), object detection (identifying and locating multiple objects), and instance segmentation (segmenting and identifying individual instances of objects).

- Image Classification: Assigning labels or categories to whole images based on their content. This involves training models to recognize and classify images into predefined classes, such as identifying whether an image contains a cat or a dog.

- Image Segmentation: Dividing an image into regions or segments based on similar properties, such as color, texture, or shape. This allows for a more detailed understanding of the image’s content and can be used for tasks like object separation, semantic segmentation, or medical image analysis.

- Face Recognition: Identifying and verifying individuals based on their facial features. Face recognition systems analyze facial patterns and compare them against known face templates or databases to recognize individuals.

- Pose Estimation: Determining the position and orientation (in 2D or 3D) of objects or human body parts within images or videos. This is useful for applications such as motion capture, robotics, virtual reality, and augmented reality.

- Scene Understanding: Inferring higher-level information about the content and context of a scene. This involves tasks like scene classification (e.g., recognizing indoor vs. outdoor scenes), scene parsing (segmenting a scene into its constituent objects and regions), or understanding the spatial relationships between objects in a scene.

- Optical Character Recognition (OCR): Extracting text from images or documents. OCR enables the conversion of printed or handwritten text into machine-readable formats, allowing for text analysis, document indexing, and more.

- Video Analysis: Analyzing and interpreting videos to extract information and detect patterns. This includes tasks such as action recognition (identifying and classifying human activities in videos), video tracking (following and locating objects across frames), and video summarization (creating condensed representations of longer videos).
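
As a concrete illustration of image segmentation from the list above, the following sketch groups bright pixels of a tiny grayscale image into connected regions using thresholding and a flood fill. It is a deliberately simple classical technique, not how modern learned segmentation models work; the `segment` function, the threshold value, and the sample image are all assumptions made for the example.

```python
from collections import deque


def segment(image, threshold):
    """Label 4-connected regions of pixels brighter than `threshold`.

    Returns (labels, count): `labels` has the same shape as `image`, with 0
    for background and 1..count for each region; `count` is the region total.
    """
    rows, cols = len(image), len(image[0])
    labels = [[0] * cols for _ in range(rows)]
    count = 0
    for r in range(rows):
        for c in range(cols):
            if image[r][c] <= threshold or labels[r][c]:
                continue  # background pixel, or already assigned to a region
            count += 1
            labels[r][c] = count
            queue = deque([(r, c)])
            while queue:  # breadth-first flood fill from the seed pixel
                y, x = queue.popleft()
                for ny, nx in ((y - 1, x), (y + 1, x), (y, x - 1), (y, x + 1)):
                    if (0 <= ny < rows and 0 <= nx < cols
                            and image[ny][nx] > threshold
                            and not labels[ny][nx]):
                        labels[ny][nx] = count
                        queue.append((ny, nx))
    return labels, count


# Hypothetical 3x4 grayscale image (0 = black, 255 = white).
image = [
    [200, 210, 10, 10],
    [190, 20, 10, 220],
    [10, 10, 10, 230],
]
labels, count = segment(image, threshold=128)
for row in labels:
    print(row)
print("regions:", count)
```

Here the bright pixels in the top-left corner and those on the right edge end up in two separate regions because they do not touch horizontally or vertically.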

Computer vision finds applications in various fields, including autonomous vehicles, surveillance systems, medical imaging, robotics, augmented reality, quality control in manufacturing, image and video search, and many more. It plays a crucial role in enabling machines to interact with and understand the visual world, opening up numerous possibilities for automation, analysis, and decision-making based on visual data.

3. MLOps

MLOps stands for Machine Learning Operations. It refers to the practices and techniques used to manage and operationalize machine learning systems in production environments. MLOps combines aspects of machine learning, data engineering, and software engineering to ensure the successful deployment, monitoring, and maintenance of machine learning models.

The goal of MLOps is to bridge the gap between data scientists, who develop and train machine learning models, and the operations teams responsible for deploying and managing these models in real-world applications. MLOps provides a set of principles, tools, and processes to streamline the development and deployment of machine learning models, making the entire lifecycle more efficient, reliable, and scalable.
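
To make the monitoring part of that lifecycle more concrete, here is a minimal sketch of a check that tracks a deployed model's accuracy over its most recent labeled predictions and flags when it falls below a floor. The `AccuracyMonitor` class, its window size, and its threshold are hypothetical names and values chosen for illustration; production setups usually rely on dedicated monitoring tooling and richer metrics.

```python
from collections import deque


class AccuracyMonitor:
    """Track accuracy over the most recent `window` labeled predictions."""

    def __init__(self, window=100, min_accuracy=0.9):
        self.outcomes = deque(maxlen=window)  # True where prediction was right
        self.min_accuracy = min_accuracy

    def record(self, predicted, actual):
        """Store whether one prediction matched its ground-truth label."""
        self.outcomes.append(predicted == actual)

    def accuracy(self):
        """Return accuracy over the current window, or None if it is empty."""
        if not self.outcomes:
            return None
        return sum(self.outcomes) / len(self.outcomes)

    def needs_attention(self):
        """True once the window is full and accuracy is below the floor."""
        return (len(self.outcomes) == self.outcomes.maxlen
                and self.accuracy() < self.min_accuracy)


monitor = AccuracyMonitor(window=4, min_accuracy=0.75)
for predicted, actual in [(1, 1), (0, 0), (1, 0), (0, 1)]:
    monitor.record(predicted, actual)
print(monitor.accuracy(), monitor.needs_attention())
```

Waiting for a full window before raising a flag is a design choice that avoids alerts based on only a handful of predictions.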

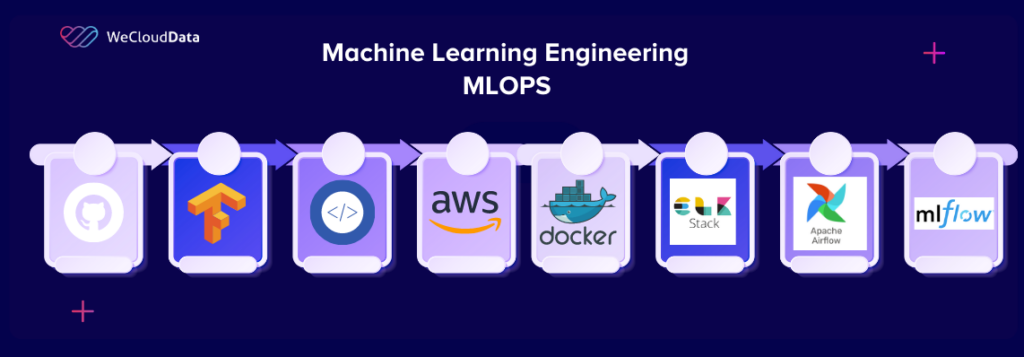

Several tools are available for implementing MLOps practices. Here are some that are commonly used in the industry, grouped by purpose:

- Version Control Systems:

  - Git: A widely used distributed version control system for tracking changes in code, data, and configurations.

  - GitHub: A platform built around Git that provides additional collaboration and project management features.

- Continuous Integration/Continuous Deployment (CI/CD) Tools:

  - Jenkins: An open-source automation server that facilitates continuous integration and continuous deployment pipelines.

  - CircleCI: A cloud-based CI/CD platform that allows automated building, testing, and deployment of software applications.

  - GitLab CI/CD: Integrated CI/CD capabilities provided by GitLab, enabling end-to-end automation and deployment pipelines.

- Containerization and Orchestration:

  - Docker: A platform that allows packaging applications and their dependencies into containers, ensuring consistent and reproducible deployment environments.

  - Kubernetes: An open-source container orchestration platform for automating the deployment, scaling, and management of containerized applications.

- Model Training and Deployment:

  - TensorFlow: An open-source machine learning framework that provides tools and libraries for building and deploying ML models.

  - PyTorch: A popular deep learning framework that offers flexibility and ease of use in building and deploying ML models.

  - Kubeflow: An open-source platform built on Kubernetes for developing, deploying, and managing ML workflows and models.

- Monitoring and Logging:

  - Prometheus: An open-source monitoring and alerting toolkit that collects metrics and provides insights into system performance.

  - Grafana: A visualization and monitoring tool that integrates with various data sources, including Prometheus, to create interactive dashboards.

  - ELK Stack (Elasticsearch, Logstash, Kibana): A combination of tools used for centralized logging, log analysis, and visualization.

- Workflow Automation:

  - Apache Airflow: An open-source platform to programmatically schedule, monitor, and orchestrate complex data pipelines and workflows.

  - Luigi: A Python-based framework for building and managing data pipeline workflows.

- Model Registry and Versioning:

  - MLflow: An open-source platform for managing the ML model lifecycle, including experiment tracking, model versioning, and deployment.

- Cloud Services:

  - Amazon SageMaker: A fully managed service provided by AWS for building, training, and deploying ML models at scale.

  - Azure Machine Learning: A cloud-based service provided by Microsoft Azure that supports the entire ML lifecycle, from data preparation to model deployment.

  - Google Cloud AI Platform: A set of cloud-based tools and services offered by Google Cloud for developing, training, and deploying ML models. Its capabilities have since been folded into Vertex AI.

These are just a few examples of the many tools available for implementing MLOps. The choice of tools depends on the specific requirements, infrastructure, and preferences of the organization or project.
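
Whatever tools are chosen, workflow automation usually comes down to running pipeline steps in dependency order. The sketch below shows that idea using only Python's standard `graphlib` module (Python 3.9 or later). It does not use the API of Airflow, Luigi, or any other tool named above, and the step names and `run_pipeline` helper are hypothetical.

```python
from graphlib import TopologicalSorter

# Each hypothetical step maps to the set of steps that must finish before it.
PIPELINE = {
    "ingest": set(),
    "validate": {"ingest"},
    "train": {"validate"},
    "evaluate": {"train"},
    "deploy": {"evaluate"},
}


def run_pipeline(pipeline, actions):
    """Run each step's action once all of its dependencies have run.

    Returns the list of step names in the order they were executed.
    """
    executed = []
    for step in TopologicalSorter(pipeline).static_order():
        actions[step]()  # a real orchestrator would add retries and logging
        executed.append(step)
    return executed


# Placeholder actions that only print; real steps would call training code,
# test suites, or deployment scripts.
actions = {name: (lambda n=name: print(f"running {n}")) for name in PIPELINE}
order = run_pipeline(PIPELINE, actions)
print(order)
```

Dedicated orchestrators add scheduling, retries, parallel execution, and monitoring on top of this ordering logic, which is why they are generally preferred over hand-rolled scripts for production pipelines.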

By adopting MLOps practices, organizations can accelerate the development and deployment of machine learning models, improve their reliability, and reduce the time-to-market for AI-driven applications.