What are portfolio projects?

A portfolio is a collection of projects, code, documents, and other materials that showcase your skills. A portfolio goes beyond degrees and certifications by demonstrating the practical skills a candidate has acquired.

A decent portfolio project usually takes more effort than most learners expect. It’s more than just uploading your code to GitHub. Data engineering projects are also harder to demonstrate than data science projects, which tend to have more visual components.

Below is a list of things aspiring data engineers can work on to strengthen their profiles.

- Personal projects

  - Project summary

  - Data pipeline architecture diagrams

  - Data infrastructure configuration templates/scripts

  - Working code and scripts (ETL/ELT scripts, pipeline DAGs, etc.)

- GitHub

  - Code committed and pushed to your GitHub repositories for employers to review

- Blogs

  - Blog posts that document your learning journey and your views on the data engineering world. We suggest Medium, Substack, or WordPress as the blogging platform. You can also set up your own site with GitHub Pages.

WeCloudData’s suggestion

- A portfolio is extremely important because the job search is often a chicken-and-egg problem: it’s hard to show work-related experience without having already worked in a DE role. Projects are therefore one of the best ways for a career switcher to showcase their background and skills!

- Blog posts and GitHub repositories are great tools for networking and cold outreach on LinkedIn

- Portfolio projects not only demonstrate your skills but also show the hiring team how serious you are and how much effort you’ve put in

Building End-to-End DE Projects

In large companies, traditional data engineers may focus on very specific tasks. For example, a DE working as an ETL specialist may use Informatica to transform and prepare data within the Informatica environment. However, the job market increasingly looks for candidates who know more tools and design patterns. This is especially true in startups and tech companies that build their infrastructure on public clouds: data engineers there have access to an abundance of tools, and they either need to know how to work with many of them or be able to learn very quickly.

Therefore, WeCloudData suggests that, as you learn data engineering and build your portfolio, you focus on projects that are end-to-end.

What does an end-to-end project mean? An end-to-end project covers the entire data pipeline: data collection and ingestion, cleaning and transformation (ETL/ELT), loading into a data lake or warehouse, and reverse ETL (pushing modeled data back into operational tools). Building something of this scope is challenging for a learner, but try to make your pipeline as complex and complete as possible.
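To make these stages concrete, here is a minimal sketch of an end-to-end pipeline in Python using only the standard library. Everything in it is hypothetical: the inline CSV stands in for a real source (an API, a CDC stream, or files in object storage), an in-memory SQLite database stands in for the warehouse, and `reverse_etl` simply returns payloads that a real project would push to an operational tool such as a CRM. A portfolio project would swap in real sources, a cloud warehouse, and an orchestrator, but the shape of the stages stays the same.

```python
import csv
import io
import sqlite3

# Hypothetical raw source data; a real project would pull this from an API,
# a CDC stream, or files landed in object storage.
RAW_ORDERS_CSV = """order_id,customer,amount,order_date
1,alice,120.50,2024-01-03
2,bob,,2024-01-03
3,alice,80.00,2024-01-04
3,alice,80.00,2024-01-04
"""


def extract(raw_csv: str) -> list[dict]:
    """Collection/ingestion: parse raw records from the source."""
    return list(csv.DictReader(io.StringIO(raw_csv)))


def transform(rows: list[dict]) -> list[tuple]:
    """Cleaning: drop rows with no amount, dedupe on order_id, cast types."""
    seen = set()
    clean = []
    for row in rows:
        if not row["amount"] or row["order_id"] in seen:
            continue
        seen.add(row["order_id"])
        clean.append((int(row["order_id"]), row["customer"],
                      float(row["amount"]), row["order_date"]))
    return clean


def load(conn: sqlite3.Connection, rows: list[tuple]) -> None:
    """Loading: write a staging table, then materialize a warehouse table."""
    conn.execute("CREATE TABLE IF NOT EXISTS stg_orders ("
                 "order_id INTEGER PRIMARY KEY, customer TEXT, "
                 "amount REAL, order_date TEXT)")
    conn.executemany("INSERT OR REPLACE INTO stg_orders VALUES (?, ?, ?, ?)", rows)
    conn.execute("DROP TABLE IF EXISTS customer_revenue")
    conn.execute("CREATE TABLE customer_revenue AS "
                 "SELECT customer, SUM(amount) AS revenue "
                 "FROM stg_orders GROUP BY customer")
    conn.commit()


def reverse_etl(conn: sqlite3.Connection) -> list[dict]:
    """Reverse ETL: read modeled results back out as payloads for an operational tool."""
    cur = conn.execute("SELECT customer, revenue FROM customer_revenue ORDER BY customer")
    return [{"customer": customer, "lifetime_revenue": revenue} for customer, revenue in cur]


def run_pipeline(raw_csv: str) -> list[dict]:
    conn = sqlite3.connect(":memory:")
    try:
        load(conn, transform(extract(raw_csv)))
        return reverse_etl(conn)
    finally:
        conn.close()


if __name__ == "__main__":
    for payload in run_pipeline(RAW_ORDERS_CSV):
        print(payload)
```

Running the script prints a single payload, `{'customer': 'alice', 'lifetime_revenue': 200.5}`: the transform step drops bob’s order because its amount is missing and removes the duplicate of order 3.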

Showing only the code or scripts that process data for ETL won’t give you much of an advantage in the job search.

Keep in mind that hiring managers always want to know the following:

- What problem are you trying to solve?

- What tools and platforms did you use?

- Why and how did you choose particular tools and approaches?

- What benefits did your approach have? Was it optimizing speed, lowering infrastructure cost, or improving reliability?

Importance of Data Architecture and Demo

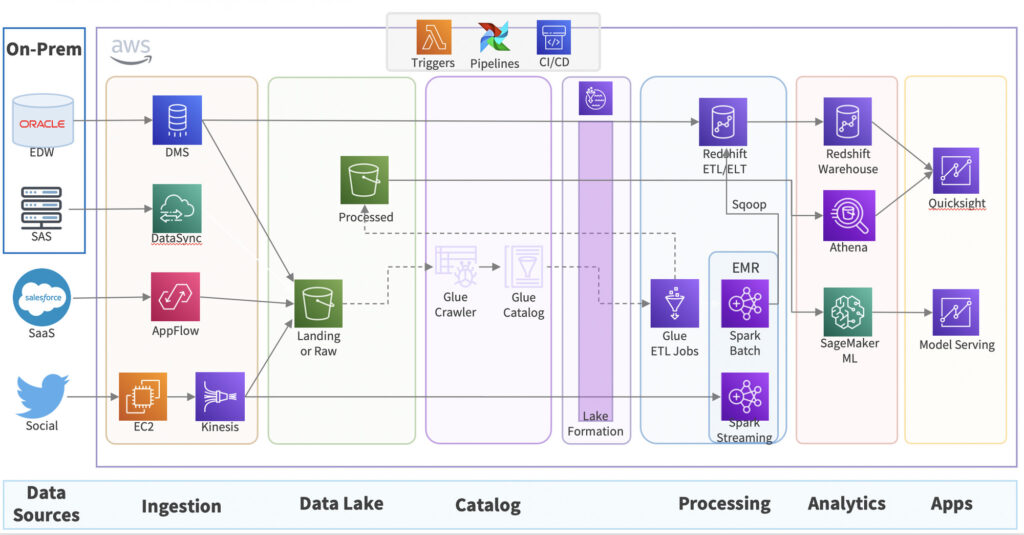

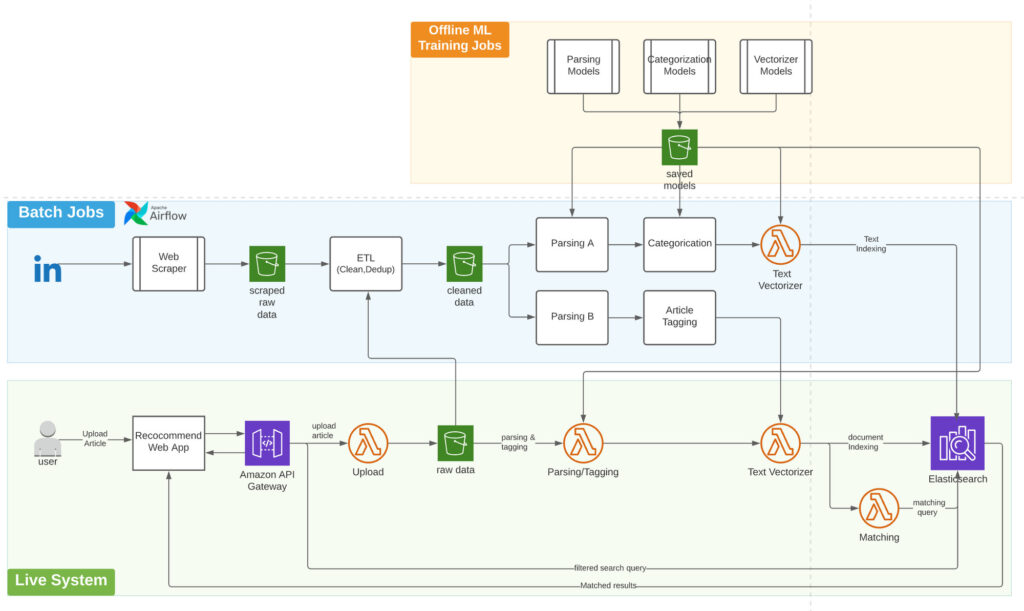

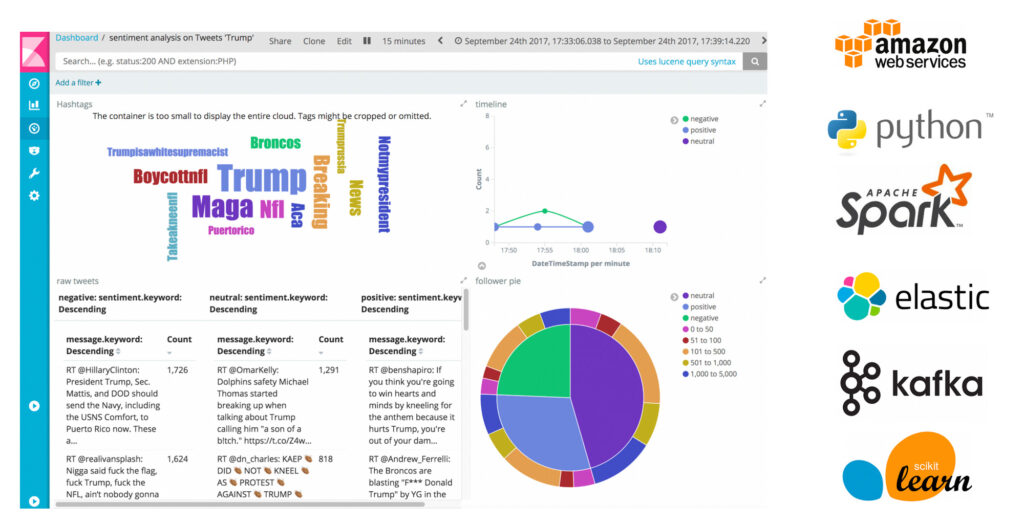

Data pipelines are hard to explain in words, and the best way to communicate yours is with a pipeline diagram. Data engineers should know how to use diagramming tools such as draw.io or Lucidchart to design pipelines and data architectures. The diagram doesn’t have to look highly professional, but it needs to articulate the key components. A clear diagram helps hiring managers understand what you did and leaves a strong impression. You can also use it during an interview to walk hiring managers and senior data engineers through your project.

Things to include on a DE project diagram

- Data sources

- Data destinations

- Data store (databases, data warehouse, or data lake)

- Data ingestion process (CDC, Kafka, Airbyte/Fivetran, etc.)

- Staging vs production zones

- Critical logic in your pipelines

- Automation workflow

- Environment/Infrastructure (AWS, Kubernetes, Spark Clusters, etc.)

- Analytics output if possible (BI dashboards)

Importance of real project experience

Nothing beats real hands-on experience! Hiring managers will always prefer candidates with real experience. Real experience doesn’t have to mean employment; it could be self-guided projects, freelance projects, or other project work with real clients.

In this section, we will discuss why real project experience can help you stand out. Here are some of the benefits of real client projects:

- You will gain experience working with real companies and clients, so you can speak concretely about your work in interviews and build more credibility

- You will get more interviews because of that real experience

- You will be able to negotiate a higher starting salary

- For career switchers, real projects help you close the experience gap.

Let’s try to understand the important factors in hiring decisions:

- Hiring managers want to hire someone who has the right skills

- Hiring managers want to hire someone with a good track record so they have more certainty in the hiring decision

- Hiring managers want to hire someone who represents a lower risk

- It’s not about how MANY tools a candidate knows

- It’s not about how MANY years of experience a candidate has

- It’s more about experience that demonstrates problem-solving skills

For career switchers coming from non-data and non-tech backgrounds, past work experience doesn’t carry much weight and oftentimes creates disadvantages. It’s easy for hiring managers to make biased assumptions that you’re not a good fit without even looking at your skills and capabilities. Working on real client projects means your work will carry more weight on your resume and help build trust, giving employers more confidence when they decide among candidates. You will also learn things you don’t have the opportunity to practice on your own:

- Project scoping and requirements gathering

- Business communications

- Task prioritization

- Debugging, solving technical challenges, handling messy situations, and learning from mistakes

- Associating your work with measurable business value

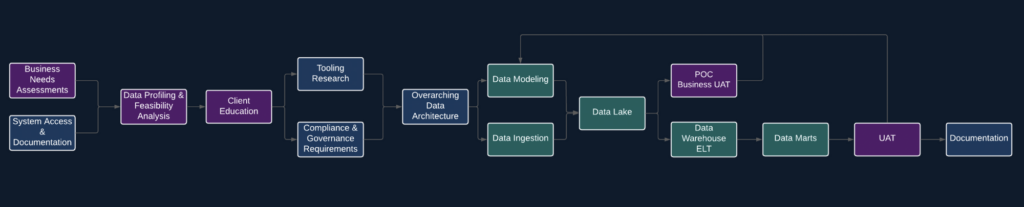

Understanding how real-life projects work

Here’s a sample data warehousing project workflow.

A project team is tasked with creating a modern data warehouse in the Azure cloud. The project managers, data engineers, and IT/platform engineers need to work together to accomplish the following for the company/client:

- Understand the business needs for a data warehouse

- Get IT infrastructure access sorted out

- Perform data profiling and assess the feasibility/scope of the work involved

- Decide on the tools/platforms to use for the data warehouse project

- Work with the compliance team to make sure best practices are followed

- Design the overarching data architecture

- Create the data models (dimension models, wide tables, etc.)

- Ingest the data from different sources to the data lake or staging database

- Write ELT/ETL scripts to materialize the tables in the data warehouse

- Run UAT sessions with the business users along the way

- Create wide tables and data marts and build dashboards for final UAT and approval

- Document the entire process for the company/client
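The data modeling and data mart steps in this workflow can be sketched in a few lines. This is a minimal illustration, assuming a hypothetical sales domain with made-up table names (`dim_customer`, `dim_date`, `fact_sales`, `mart_monthly_sales_by_region`) and an in-memory SQLite database standing in for the cloud warehouse: a fact table references two dimension tables, and a denormalized mart is materialized from them for dashboards and UAT.

```python
import sqlite3

# Hypothetical star schema: one fact table keyed to two dimension tables.
DDL = """
CREATE TABLE dim_customer (customer_key INTEGER PRIMARY KEY, customer_name TEXT, region TEXT);
CREATE TABLE dim_date (date_key INTEGER PRIMARY KEY, full_date TEXT, month TEXT);
CREATE TABLE fact_sales (
    sale_id INTEGER PRIMARY KEY,
    customer_key INTEGER REFERENCES dim_customer(customer_key),
    date_key INTEGER REFERENCES dim_date(date_key),
    amount REAL
);
"""


def build_warehouse() -> sqlite3.Connection:
    conn = sqlite3.connect(":memory:")
    conn.executescript(DDL)
    # Made-up sample rows standing in for data ingested from source systems.
    conn.executemany("INSERT INTO dim_customer VALUES (?, ?, ?)",
                     [(1, "Acme", "East"), (2, "Globex", "West")])
    conn.executemany("INSERT INTO dim_date VALUES (?, ?, ?)",
                     [(20240103, "2024-01-03", "2024-01"),
                      (20240215, "2024-02-15", "2024-02")])
    conn.executemany("INSERT INTO fact_sales VALUES (?, ?, ?, ?)",
                     [(1, 1, 20240103, 100.0),
                      (2, 2, 20240103, 50.0),
                      (3, 1, 20240215, 25.0)])
    # Data mart: join the fact table to its dimensions and aggregate
    # into a denormalized table that a BI dashboard can query directly.
    conn.execute("""
        CREATE TABLE mart_monthly_sales_by_region AS
        SELECT d.month, c.region, SUM(f.amount) AS total_sales
        FROM fact_sales f
        JOIN dim_customer c ON f.customer_key = c.customer_key
        JOIN dim_date d ON f.date_key = d.date_key
        GROUP BY d.month, c.region
    """)
    conn.commit()
    return conn


def monthly_sales(conn: sqlite3.Connection) -> list[tuple]:
    """Query the mart the way a dashboard or UAT check might."""
    return conn.execute(
        "SELECT month, region, total_sales FROM mart_monthly_sales_by_region "
        "ORDER BY month, region"
    ).fetchall()


if __name__ == "__main__":
    warehouse = build_warehouse()
    for row in monthly_sales(warehouse):
        print(row)
    warehouse.close()
```

Keeping the mart separate from the fact and dimension tables lets business users validate familiar, pre-aggregated numbers during UAT without changing the underlying model.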

Sample Data Engineering Portfolio Projects

Don’t know where to get started? Get some inspiration from WeCloudData students and alumni. You can find some project demos on our YouTube channel: https://youtube.com/@weclouddata